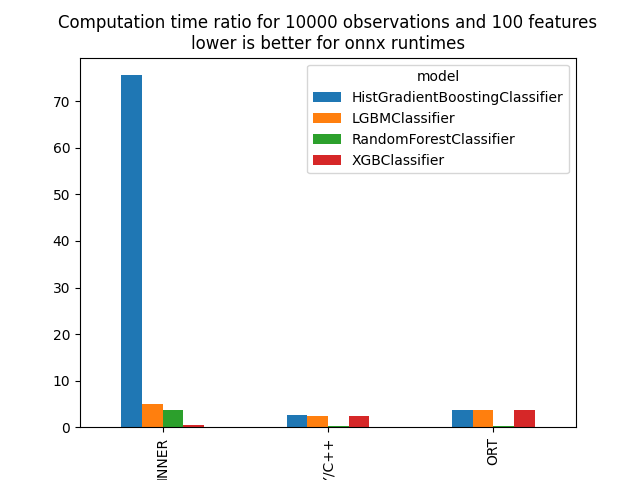

Benchmark Random Forests, Tree Ensemble

The following script benchmarks several libraries that implement random forests and boosted trees. To reproduce the benchmark, install the following packages:

python -m virtualenv env

cd env

pip install -i https://test.pypi.org/simple/ ort-nightly

pip install git+https://github.com/microsoft/onnxconverter-common.git@jenkins

pip install git+https://github.com/xadupre/sklearn-onnx.git@jenkins

pip install mlprodict matplotlib scikit-learn pandas threadpoolctl

pip install lightgbm xgboost jinja2
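Because several of these packages come from nightly builds or git branches, it can help to record which versions actually ended up in the environment before comparing timings. The following is a minimal sketch using only the standard library; the helper name `installed_versions` is hypothetical, and the distribution names are taken from the pip commands above (with `skl2onnx` assumed as the distribution name of sklearn-onnx).

```python
from importlib import metadata

# Distribution names taken from the pip commands above.
PACKAGES = [
    "ort-nightly", "onnxconverter-common", "skl2onnx", "mlprodict",
    "scikit-learn", "lightgbm", "xgboost", "pandas", "matplotlib",
    "threadpoolctl", "jinja2",
]


def installed_versions(packages=PACKAGES):
    """Return {distribution name: version string, or None if missing}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print("{:22s} {}".format(name, version or "not installed"))
```

Saving this listing next to the benchmark results makes it easier to explain differences between two runs.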

Import

import os

import pickle

from pprint import pprint

import numpy

import pandas

import matplotlib.pyplot as plt

from xgboost import XGBClassifier

from lightgbm import LGBMClassifier

from onnxruntime import InferenceSession

from sklearn.ensemble import HistGradientBoostingClassifier

from sklearn.ensemble import RandomForestClassifier

from sklearn.datasets import make_classification

from skl2onnx import to_onnx

from mlprodict.onnx_conv import register_converters

from mlprodict.onnxrt.validate.validate_helper import measure_time

from mlprodict.onnxrt import OnnxInference

Register additional converters with sklearn-onnx so that LightGBM and XGBoost models can be converted to ONNX.

register_converters()

Out:

[<class 'lightgbm.sklearn.LGBMClassifier'>, <class 'lightgbm.sklearn.LGBMRegressor'>, <class 'lightgbm.basic.Booster'>, <class 'mlprodict.onnx_conv.operator_converters.parse_lightgbm.WrappedLightGbmBooster'>, <class 'mlprodict.onnx_conv.operator_converters.parse_lightgbm.WrappedLightGbmBoosterClassifier'>, <class 'xgboost.sklearn.XGBClassifier'>, <class 'xgboost.sklearn.XGBRegressor'>, <class 'mlinsights.mlmodel.transfer_transformer.TransferTransformer'>, <class 'skl2onnx.sklapi.woe_transformer.WOETransformer'>, <class 'mlprodict.onnx_conv.scorers.register.CustomScorerTransform'>]

Problem

max_depth = 7

n_classes = 20

n_estimators = 500

n_features = 100

REPEAT = 3

NUMBER = 1

train, test = 1000, 10000

print('dataset')

X_, y_ = make_classification(n_samples=train + test, n_features=n_features,

                             n_classes=n_classes, n_informative=n_features - 3)

X_ = X_.astype(numpy.float32)

y_ = y_.astype(numpy.int64)

X_train, X_test = X_[:train], X_[train:]

y_train, y_test = y_[:train], y_[train:]

compilation = []

def train_cache(model, X_train, y_train, max_depth, n_estimators, n_classes):

    name = "cache-{}-N{}-f{}-d{}-e{}-cl{}.pkl".format(

        model.__class__.__name__, X_train.shape[0], X_train.shape[1],

        max_depth, n_estimators, n_classes)

    if os.path.exists(name):

        with open(name, 'rb') as f:

            return pickle.load(f)

    else:

        model.fit(X_train, y_train)

        with open(name, 'wb') as f:

            pickle.dump(model, f)

        return model

Out:

dataset
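Note that `train_cache` builds the file name from the model class and the problem sizes only, while `make_classification` is called without a `random_state`. A later run can therefore reload a model that was fitted on a different random draw. This does not affect the timings, but it matters if predictions are compared. One possible variation, sketched below under the assumption that hashing the training data is acceptable, adds a short digest of the data to the file name. The function `cache_name` is hypothetical and not part of the original script.

```python
import hashlib


def cache_name(model_name, data_bytes, max_depth, n_estimators, n_classes):
    """Build a cache file name that also depends on the training data.

    data_bytes is the raw content of the training set, for instance
    X_train.tobytes() + y_train.tobytes() with numpy arrays.
    """
    digest = hashlib.sha256(data_bytes).hexdigest()[:12]
    return "cache-{}-d{}-e{}-cl{}-{}.pkl".format(
        model_name, max_depth, n_estimators, n_classes, digest)
```

With the arrays defined above, the call would look like `cache_name(model.__class__.__name__, X_train.tobytes() + y_train.tobytes(), max_depth, n_estimators, n_classes)`.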

RandomForestClassifier

rf = RandomForestClassifier(n_estimators=n_estimators, max_depth=max_depth)

print('train')

rf = train_cache(rf, X_train, y_train, max_depth, n_estimators, n_classes)

res = measure_time(rf.predict_proba, X_test[:10],

                   repeat=REPEAT, number=NUMBER,

                   div_by_number=True, first_run=True)

res['model'], res['runtime'] = rf.__class__.__name__, 'INNER'

pprint(res)

Out:

train

{'average': 0.24231497757136822,

'context_size': 64,

'deviation': 0.0007494085931751449,

'max_exec': 0.2432593386620283,

'min_exec': 0.24142619594931602,

'model': 'RandomForestClassifier',

'number': 1,

'repeat': 3,

'runtime': 'INNER',

'ttime': 0.7269449327141047}
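The dictionary printed by `measure_time` summarises `repeat` timed runs of `number` calls each. The sketch below produces a similar summary with the standard library only. It is not mlprodict's implementation: the helper name `simple_measure_time` is hypothetical, and the untimed warm-up call and the population standard deviation are assumptions about what `first_run=True` and `'deviation'` stand for.

```python
import statistics
import timeit


def simple_measure_time(fct, arg, repeat=3, number=1):
    """Time fct(arg) and return summary statistics in seconds per call."""
    fct(arg)  # warm-up call, not timed (assumed meaning of first_run=True)
    # One total duration per repetition, each covering `number` calls.
    raw = timeit.repeat(lambda: fct(arg), repeat=repeat, number=number)
    per_call = [t / number for t in raw]
    return {
        "average": statistics.mean(per_call),
        "deviation": statistics.pstdev(per_call),
        "min_exec": min(per_call),
        "max_exec": max(per_call),
        "repeat": repeat,
        "number": number,
        "ttime": sum(raw),  # total measured time over all repetitions
    }
```

With `repeat=3` and `number=1`, as in this benchmark, `ttime` is simply three times `average`, which matches the figures printed above.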

ONNX

def measure_onnx_runtime(model, xt, repeat=REPEAT, number=NUMBER,

                         verbose=True):

    if verbose:

        print(model.__class__.__name__)

    # 'INNER': the model's own predict_proba.

    res = measure_time(model.predict_proba, xt,

                       repeat=repeat, number=number,

                       div_by_number=True, first_run=True)

    res['model'], res['runtime'] = model.__class__.__name__, 'INNER'

    res['N'] = xt.shape[0]

    res["max_depth"] = max_depth

    res["n_estimators"] = n_estimators

    res["n_features"] = n_features

    if verbose:

        pprint(res)

    yield res

    # 'NPY/C++': the converted ONNX graph run by mlprodict's OnnxInference.

    onx = to_onnx(model, X_train[:1], options={id(model): {'zipmap': False}})

    oinf = OnnxInference(onx)

    res = measure_time(lambda x: oinf.run({'X': x}), xt,

                       repeat=repeat, number=number,

                       div_by_number=True, first_run=True)

    res['model'], res['runtime'] = model.__class__.__name__, 'NPY/C++'

    res['N'] = xt.shape[0]

    res['size'] = len(onx.SerializeToString())

    res["max_depth"] = max_depth

    res["n_estimators"] = n_estimators

    res["n_features"] = n_features

    if verbose:

        pprint(res)

    yield res

    # 'ORT': the same ONNX graph run by onnxruntime.

    sess = InferenceSession(onx.SerializeToString())

    res = measure_time(lambda x: sess.run(None, {'X': x}), xt,

                       repeat=repeat, number=number,

                       div_by_number=True, first_run=True)

    res['model'], res['runtime'] = model.__class__.__name__, 'ORT'

    res['N'] = xt.shape[0]

    res['size'] = len(onx.SerializeToString())

    res["max_depth"] = max_depth

    res["n_estimators"] = n_estimators

    res["n_features"] = n_features

    if verbose:

        pprint(res)

    yield res

compilation.extend(list(measure_onnx_runtime(rf, X_test)))

Out:

RandomForestClassifier

{'N': 10000,

'average': 3.7787221440424523,

'context_size': 64,

'deviation': 0.001190284858433674,

'max_depth': 7,

'max_exec': 3.7804053463041782,

'min_exec': 3.777863522991538,

'model': 'RandomForestClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'INNER',

'ttime': 11.336166432127357}

/var/lib/jenkins/workspace/mlprodict/mlprodict_UT_39_std/_venv/lib/python3.9/site-packages/sklearn/utils/deprecation.py:103: FutureWarning: The attribute `n_features_` is deprecated in 1.0 and will be removed in 1.2. Use `n_features_in_` instead.

warnings.warn(msg, category=FutureWarning)

/var/lib/jenkins/workspace/mlprodict/mlprodict_UT_39_std/_venv/lib/python3.9/site-packages/sklearn/utils/deprecation.py:103: FutureWarning: Attribute `n_features_` was deprecated in version 1.0 and will be removed in 1.2. Use `n_features_in_` instead.

warnings.warn(msg, category=FutureWarning)

{'N': 10000,

'average': 0.27570024070640403,

'context_size': 64,

'deviation': 0.016283346786245417,

'max_depth': 7,

'max_exec': 0.29407341964542866,

'min_exec': 0.25449113920331,

'model': 'RandomForestClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'NPY/C++',

'size': 7181758,

'ttime': 0.8271007221192122}

{'N': 10000,

'average': 0.3183505417158206,

'context_size': 64,

'deviation': 0.0017531878412634144,

'max_depth': 7,

'max_exec': 0.32066284492611885,

'min_exec': 0.3164195083081722,

'model': 'RandomForestClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'ORT',

'size': 7181758,

'ttime': 0.9550516251474619}

HistGradientBoostingClassifier

hist = HistGradientBoostingClassifier(

    max_iter=n_estimators, max_depth=max_depth)

print('train')

hist = train_cache(hist, X_train, y_train, max_depth, n_estimators, n_classes)

compilation.extend(list(measure_onnx_runtime(hist, X_test)))

Out:

train

HistGradientBoostingClassifier

{'N': 10000,

'average': 75.59624004301925,

'context_size': 64,

'deviation': 6.831427574475106,

'max_depth': 7,

'max_exec': 85.12977449037135,

'min_exec': 69.47433638200164,

'model': 'HistGradientBoostingClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'INNER',

'ttime': 226.78872012905777}

{'N': 10000,

'average': 2.525462493300438,

'context_size': 64,

'deviation': 0.035264337777219594,

'max_depth': 7,

'max_exec': 2.571142975240946,

'min_exec': 2.4852922074496746,

'model': 'HistGradientBoostingClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'NPY/C++',

'size': 4250643,

'ttime': 7.576387479901314}

{'N': 10000,

'average': 3.67889177861313,

'context_size': 64,

'deviation': 0.01841670566439024,

'max_depth': 7,

'max_exec': 3.7049297392368317,

'min_exec': 3.66534267924726,

'model': 'HistGradientBoostingClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'ORT',

'size': 4250643,

'ttime': 11.03667533583939}

LightGBM

lgb = LGBMClassifier(n_estimators=n_estimators,

                     max_depth=max_depth, pred_early_stop=False)

print('train')

lgb = train_cache(lgb, X_train, y_train, max_depth, n_estimators, n_classes)

compilation.extend(list(measure_onnx_runtime(lgb, X_test)))

Out:

train

LGBMClassifier

{'N': 10000,

'average': 5.080586918319265,

'context_size': 64,

'deviation': 0.10515529005691854,

'max_depth': 7,

'max_exec': 5.226491708308458,

'min_exec': 4.982728766277432,

'model': 'LGBMClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'INNER',

'ttime': 15.241760754957795}

{'N': 10000,

'average': 2.4445161360005536,

'context_size': 64,

'deviation': 0.03226191485108823,

'max_depth': 7,

'max_exec': 2.4900894574820995,

'min_exec': 2.419845065101981,

'model': 'LGBMClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'NPY/C++',

'size': 4470224,

'ttime': 7.333548408001661}

{'N': 10000,

'average': 3.6921193643162646,

'context_size': 64,

'deviation': 0.01073454246095909,

'max_depth': 7,

'max_exec': 3.7057488691061735,

'min_exec': 3.679514702409506,

'model': 'LGBMClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'ORT',

'size': 4470224,

'ttime': 11.076358092948794}

XGBoost

xgb = XGBClassifier(n_estimators=n_estimators, max_depth=max_depth)

print('train')

xgb = train_cache(xgb, X_train, y_train, max_depth, n_estimators, n_classes)

compilation.extend(list(measure_onnx_runtime(xgb, X_test)))

Out:

train

/usr/local/lib/python3.9/site-packages/xgboost/sklearn.py:1224: UserWarning: The use of label encoder in XGBClassifier is deprecated and will be removed in a future release. To remove this warning, do the following: 1) Pass option use_label_encoder=False when constructing XGBClassifier object; and 2) Encode your labels (y) as integers starting with 0, i.e. 0, 1, 2, ..., [num_class - 1].

warnings.warn(label_encoder_deprecation_msg, UserWarning)

[03:46:45] WARNING: ../src/learner.cc:1115: Starting in XGBoost 1.3.0, the default evaluation metric used with the objective 'multi:softprob' was changed from 'merror' to 'mlogloss'. Explicitly set eval_metric if you'd like to restore the old behavior.

XGBClassifier

{'N': 10000,

'average': 0.3845494327445825,

'context_size': 64,

'deviation': 0.010922676092145369,

'max_depth': 7,

'max_exec': 0.39922153018414974,

'min_exec': 0.37302955240011215,

'model': 'XGBClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'INNER',

'ttime': 1.1536482982337475}

{'N': 10000,

'average': 2.4073323383927345,

'context_size': 64,

'deviation': 0.057782280283436495,

'max_depth': 7,

'max_exec': 2.457572612911463,

'min_exec': 2.326398905366659,

'model': 'XGBClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'NPY/C++',

'size': 1513158,

'ttime': 7.221997015178204}

{'N': 10000,

'average': 3.5923110395669937,

'context_size': 64,

'deviation': 0.02030886910850649,

'max_depth': 7,

'max_exec': 3.60942854732275,

'min_exec': 3.563779342919588,

'model': 'XGBClassifier',

'n_estimators': 500,

'n_features': 100,

'number': 1,

'repeat': 3,

'runtime': 'ORT',

'size': 1513158,

'ttime': 10.776933118700981}

Summary

All data

name = 'plot_time_tree_ensemble'

df = pandas.DataFrame(compilation)

df.to_csv('%s.csv' % name, index=False)

df.to_excel('%s.xlsx' % name, index=False)

df

Time per model and runtime.

piv = df.pivot(index="model", columns="runtime", values="average")

piv
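The pivot table holds average times. To see how each ONNX runtime compares with the library's own `predict_proba`, the averages can be divided by the `INNER` column. The sketch below does this with plain dictionaries; the numbers are rounded from the outputs printed earlier on this page, and the helper `ratios_to_inner` is hypothetical. A value below 1 means the ONNX runtime was faster than the original library in this run.

```python
# Average times (seconds), rounded from the outputs printed above.
averages = {
    "RandomForestClassifier":
        {"INNER": 3.7787, "NPY/C++": 0.2757, "ORT": 0.3184},
    "HistGradientBoostingClassifier":
        {"INNER": 75.5962, "NPY/C++": 2.5255, "ORT": 3.6789},
    "LGBMClassifier":
        {"INNER": 5.0806, "NPY/C++": 2.4445, "ORT": 3.6921},
    "XGBClassifier":
        {"INNER": 0.3845, "NPY/C++": 2.4073, "ORT": 3.5923},
}


def ratios_to_inner(times):
    """Divide every runtime's average by the model's INNER average."""
    return {
        model: {rt: t / by_rt["INNER"] for rt, t in by_rt.items()}
        for model, by_rt in times.items()
    }


for model, by_runtime in ratios_to_inner(averages).items():
    print(model, {rt: round(r, 2) for rt, r in by_runtime.items()})
```

In this particular run the ratios fall below 1 for the scikit-learn and LightGBM models and above 1 for XGBoost, whose native prediction was faster than both ONNX runtimes.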

Graphs.

ax = piv.T.plot(kind="bar")

ax.set_title("Computation time for %d observations and %d features\n"

             "lower is better" % X_test.shape)

plt.savefig('%s.png' % name)

Available optimisation on this machine:

from mlprodict.testing.experimental_c_impl.experimental_c import code_optimisation

print(code_optimisation())

plt.show()

Out:

AVX-omp=8

Total running time of the script: (39 minutes 30.091 seconds)