
Compares implementations of ReduceMax

This example compares the numpy implementation of the operator ReduceMax to the onnxruntime implementation. If they are available, tensorflow and pytorch are included as well.
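The implementations do not return the same shape by default. The ONNX specification of ReduceMax keeps the reduced axes with size 1 (`keepdims=1`), while `numpy.amax` drops them unless `keepdims=True` is passed. The values are identical, which is what the benchmark relies on. A minimal numpy sketch of both conventions; `reduce_max_reference` is a hypothetical helper written for this illustration:

```python
import numpy


def reduce_max_reference(x, axes, keepdims=True):
    """Reference ReduceMax written with numpy.

    ONNX ReduceMax keeps the reduced axes with size 1 by default
    (keepdims=1); numpy.amax drops them unless keepdims=True.
    """
    return numpy.amax(x, axis=tuple(axes), keepdims=keepdims)


x = numpy.arange(24, dtype=numpy.float32).reshape(2, 3, 4)
kept = reduce_max_reference(x, (1,))                     # ONNX-like shape (2, 1, 4)
dropped = reduce_max_reference(x, (1,), keepdims=False)  # numpy default shape (2, 4)
print(kept.shape, dropped.shape)
print(dropped)
```

The benchmark below calls `numpy.amax(x, tuple(y))`, so its numpy output drops the reduced axes while onnxruntime keeps them; only the timings are compared.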

Available optimisation

The following code shows which vectorisation instruction set can be used (AVX or SSE) and the number of available processors.

```python
import numpy
import pandas
import matplotlib.pyplot as plt
from onnxruntime import InferenceSession
from skl2onnx.common.data_types import FloatTensorType
from skl2onnx.algebra.onnx_ops import OnnxReduceMax
from cpyquickhelper.numbers import measure_time
from tqdm import tqdm
from mlprodict.testing.experimental_c_impl.experimental_c import code_optimisation

print(code_optimisation())
```

Out:

AVX-omp=8
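`code_optimisation` is specific to mlprodict. As a rough cross-check that does not depend on it, the processor count and the SIMD flags of the CPU can be read directly. This sketch assumes a Linux system exposing `/proc/cpuinfo` (elsewhere the flag list is empty), and `cpu_capabilities` is a hypothetical helper, not part of mlprodict. It describes the CPU, not how any compiled extension was built.

```python
import os


def cpu_capabilities(cpuinfo_path="/proc/cpuinfo"):
    """Return the processor count and the SSE/AVX flags reported by the OS.

    Only Linux exposes /proc/cpuinfo; on other systems the flag list is empty.
    """
    flags = set()
    if os.path.exists(cpuinfo_path):
        with open(cpuinfo_path) as f:
            for line in f:
                # x86 kernels list CPU features on a line starting with "flags".
                if line.startswith("flags"):
                    flags.update(line.split(":", 1)[1].split())
                    break
    wanted = {"sse", "sse2", "sse4_1", "sse4_2", "avx", "avx2", "avx512f"}
    return os.cpu_count(), sorted(flags & wanted)


n_cpu, simd = cpu_capabilities()
print(n_cpu, simd)
```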

ReduceMax implementations

```python
try:
    from tensorflow.math import reduce_max as tf_reduce_max
    from tensorflow import convert_to_tensor
except ImportError:
    tf_reduce_max = None

try:
    from torch import max as torch_max, from_numpy
except ImportError:
    torch_max = None


def build_ort_reducemax(axes, op_version=14):  # opset=13, 14, ...
    # Up to opset 17, ReduceMax takes `axes` as an attribute.
    node = OnnxReduceMax('x', axes=axes, op_version=op_version,
                         output_names=['z'])
    onx = node.to_onnx(inputs=[('x', FloatTensorType())],
                       target_opset=op_version)
    sess = InferenceSession(onx.SerializeToString())
    # `y` (the axes) is ignored: they are stored in the graph.
    return lambda x, y: sess.run(None, {'x': x})


def loop_fct(fct, xs, ys):
    for x, y in zip(xs, ys):
        fct(x, y)


def benchmark_op(axes, repeat=2, number=5, name="ReduceMax", shape_fct=None):
    if shape_fct is None:
        def shape_fct(dim):
            return (3, dim, 1, 128, 64)
    ort_fct = build_ort_reducemax(axes)
    res = []
    for dim in tqdm([8, 16, 32, 64, 100, 128, 200,
                     256, 400, 512, 1024]):
        shape = shape_fct(dim)
        n_arrays = 10 if dim < 512 else 4
        xs = [numpy.random.rand(*shape).astype(numpy.float32)
              for _ in range(n_arrays)]
        ys = [numpy.array(axes, dtype=numpy.int64)
              for _ in range(n_arrays)]
        info = dict(axes=axes, shape=shape)

        # numpy
        ctx = dict(
            xs=xs, ys=ys,
            fct=lambda x, y: numpy.amax(x, tuple(y)),
            loop_fct=loop_fct)
        obs = measure_time(
            "loop_fct(fct, xs, ys)",
            div_by_number=True, context=ctx, repeat=repeat, number=number)
        obs['dim'] = dim
        obs['fct'] = 'numpy'
        obs.update(info)
        res.append(obs)

        # onnxruntime
        ctx['fct'] = ort_fct
        obs = measure_time(
            "loop_fct(fct, xs, ys)",
            div_by_number=True, context=ctx, repeat=repeat, number=number)
        obs['dim'] = dim
        obs['fct'] = 'ort'
        obs.update(info)
        res.append(obs)

        if tf_reduce_max is not None:
            # tensorflow
            ctx['fct'] = tf_reduce_max
            ctx['xs'] = [convert_to_tensor(x) for x in xs]
            ctx['ys'] = ys
            obs = measure_time(
                "loop_fct(fct, xs, ys)",
                div_by_number=True, context=ctx, repeat=repeat, number=number)
            obs['dim'] = dim
            obs['fct'] = 'tf'
            obs.update(info)
            res.append(obs)

        if torch_max is not None:
            def torch_max_axes(x, y):
                # torch.max(x, dim) reduces a single axis and returns
                # (values, indices). Reduce every axis in `y`, highest
                # first, so the remaining axis indices stay valid.
                for axis in sorted(y, reverse=True):
                    x = torch_max(x, int(axis))[0]
                return x

            # torch
            ctx['fct'] = torch_max_axes
            ctx['xs'] = [from_numpy(x) for x in xs]
            ctx['ys'] = ys  # [from_numpy(y) for y in ys]
            obs = measure_time(
                "loop_fct(fct, xs, ys)",
                div_by_number=True, context=ctx, repeat=repeat, number=number)
            obs['dim'] = dim
            obs['fct'] = 'torch'
            obs.update(info)
            res.append(obs)

    # Dataframes
    shape_name = str(shape).replace(str(dim), "N")
    df = pandas.DataFrame(res)
    df.columns = [_.replace('dim', 'N') for _ in df.columns]
    # pandas >= 2.0 only accepts keyword arguments in pivot.
    piv = df.pivot(index='N', columns='fct', values='average')

    # Speedup relative to numpy: > 1 means faster than numpy.
    rs = piv.copy()
    for c in ['ort', 'torch', 'tf', 'tf_copy']:
        if c in rs.columns:
            rs[c] = rs['numpy'] / rs[c]
    rs['numpy'] = 1.

    # Graphs.
    fig, ax = plt.subplots(1, 2, figsize=(12, 4))
    piv.plot(logx=True, logy=True, ax=ax[0],
             title="%s benchmark\n%r - %r"
                   " lower better" % (name, shape_name, axes))
    ax[0].legend(prop={"size": 9})
    rs.plot(logx=True, logy=True, ax=ax[1],
            title="%s Speedup, baseline=numpy\n%r - %r"
                  " higher better" % (name, shape_name, axes))
    ax[1].plot([min(rs.index), max(rs.index)], [0.5, 0.5], 'g--')
    ax[1].plot([min(rs.index), max(rs.index)], [2., 2.], 'g--')
    ax[1].legend(prop={"size": 9})
    return df, rs, ax


dfs = []
```
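`torch.max` with a `dim` argument reduces one axis at a time, so a multi-axis ReduceMax has to chain several calls. Reducing the highest axis first keeps the indices of the remaining axes valid, because removing a later axis never shifts an earlier one. The sketch below checks that rule with numpy, where `numpy.max` with a single axis stands in for the one-axis primitive; `reduce_max_one_axis_at_a_time` is a hypothetical name.

```python
import numpy


def reduce_max_one_axis_at_a_time(x, axes):
    """Chain single-axis max reductions, highest axis first.

    Removing a high axis does not change the position of lower axes,
    so the remaining entries of `axes` stay valid after each step.
    """
    for axis in sorted(axes, reverse=True):
        x = numpy.max(x, axis=axis)
    return x


rng = numpy.random.default_rng(0)
x = rng.random((5, 64, 16, 16), dtype=numpy.float32)
for axes in [(3,), (0,), (1, 2), (0, 2), (0, 2, 3)]:
    expected = numpy.amax(x, axis=axes)
    got = reduce_max_one_axis_at_a_time(x, axes)
    assert got.shape == expected.shape
    assert numpy.array_equal(got, expected)
```

Reducing the lowest axis first would be wrong as soon as two axes are involved: after removing axis 0, the former axis 2 becomes axis 1.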

Reduction on a particular case KR

Consecutive axes that are not reduced are merged, and so are consecutive reduced axes. KR means a kept axis followed by a reduced axis.
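The merging rule can be illustrated with a hypothetical helper, `merge_axes`, which is only a sketch of the idea and not onnxruntime's code. Merging consecutive axes of a C-contiguous array is a plain reshape, so the reduction on the merged shape gives the same values. N = 10 is arbitrary.

```python
import numpy


def merge_axes(shape, axes):
    """Merge consecutive kept axes and consecutive reduced axes.

    Returns the merged shape, the reduced positions in that merged
    shape, and a pattern string such as 'KR' (K = kept, R = reduced).
    """
    reduced = set(a % len(shape) for a in axes)
    merged_shape, pattern = [], []
    for i, dim in enumerate(shape):
        kind = "R" if i in reduced else "K"
        if pattern and pattern[-1] == kind:
            merged_shape[-1] *= dim  # same kind as the previous axis: merge
        else:
            merged_shape.append(dim)
            pattern.append(kind)
    merged_axes = tuple(i for i, k in enumerate(pattern) if k == "R")
    return tuple(merged_shape), merged_axes, "".join(pattern)


shape, axes = (8, 24, 48, 10), (3,)
m_shape, m_axes, pattern = merge_axes(shape, axes)
print(m_shape, m_axes, pattern)  # (9216, 10) (1,) KR

x = numpy.random.rand(*shape).astype(numpy.float32)
full = numpy.amax(x, axis=axes)
merged = numpy.amax(x.reshape(m_shape), axis=m_axes)
assert numpy.array_equal(full.reshape(-1), merged.reshape(-1))
```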

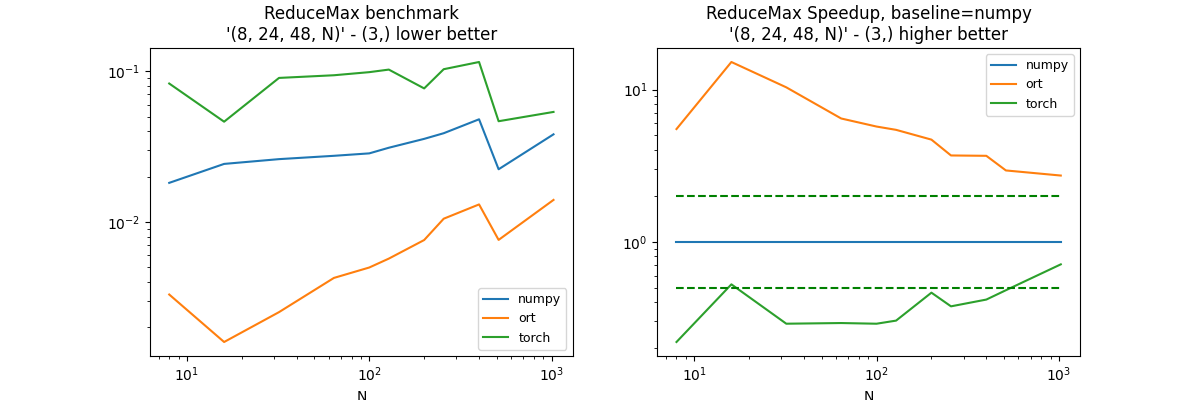

(8, 24, 48, N), axis=(3, )

```python
axes = (3, )
df, piv, ax = benchmark_op(axes, shape_fct=lambda dim: (8, 24, 48, dim))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


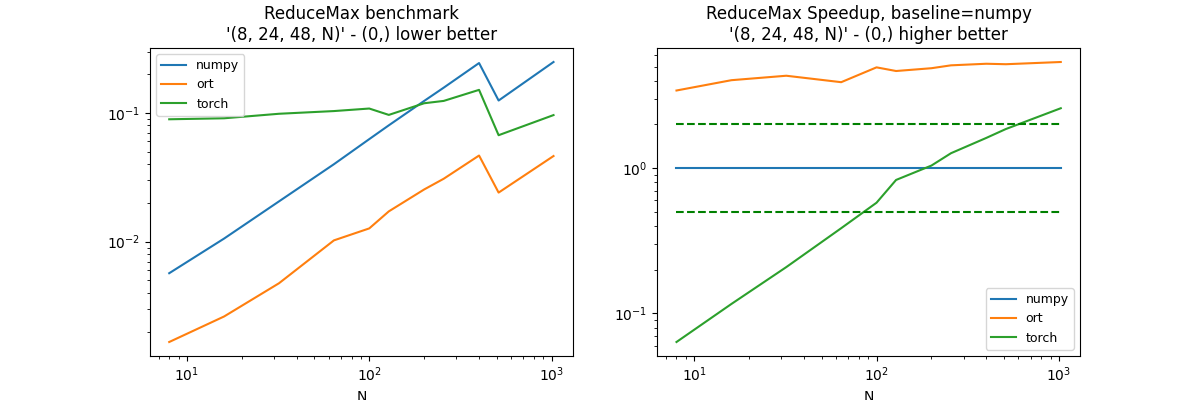

Reduction on a particular case RK

Consecutive axes that are not reduced are merged, and so are consecutive reduced axes. RK means a reduced axis followed by a kept axis.
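After merging, an RK reduction is a column-wise maximum over an (R, K) matrix. One way to compute it, assumed here for illustration and not necessarily what any benchmarked library does, is a running maximum updated row by row, each row being contiguous in memory. A numpy sketch for the shape (8, 24, 48, N) with N = 10:

```python
import numpy

# RK layout: the reduced axis comes first, the kept axes follow.
shape = (8, 24, 48, 10)
x = numpy.random.rand(*shape).astype(numpy.float32)

# Merging the kept axes (24, 48, 10) gives an (8, 11520) matrix.
as_matrix = x.reshape(8, -1)
col_max = as_matrix.max(axis=0)

# Running-maximum formulation: one contiguous row at a time.
running = as_matrix[0].copy()
for row in as_matrix[1:]:
    numpy.maximum(running, row, out=running)

expected = numpy.amax(x, axis=0)
assert numpy.array_equal(col_max.reshape(expected.shape), expected)
assert numpy.array_equal(running, col_max)
```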

(8, 24, 48, N), axis=(0, )

```python
axes = (0, )
df, piv, ax = benchmark_op(axes, shape_fct=lambda dim: (8, 24, 48, dim))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


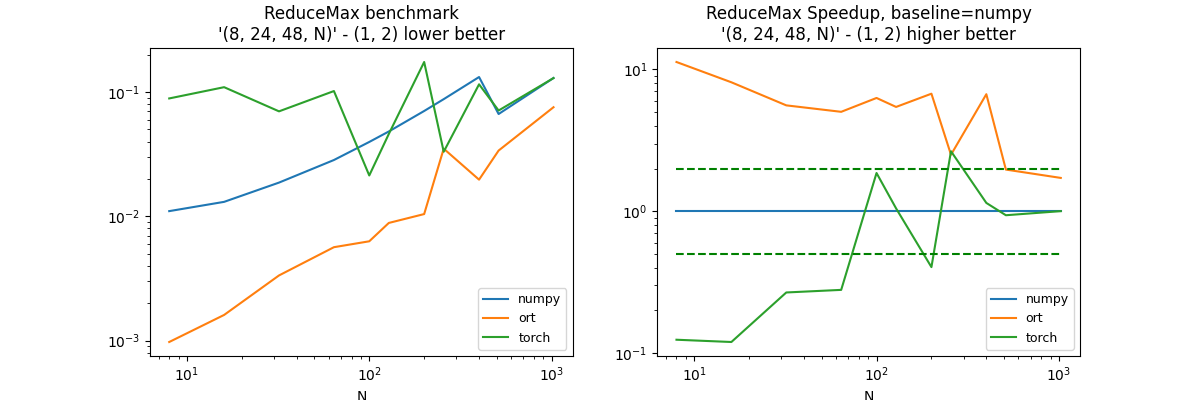

Reduction on a particular case KRK

Consecutive axes that are not reduced are merged, and so are consecutive reduced axes. KRK means a kept axis, then a reduced axis, then a kept axis.
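After merging, the case (8, 24, 48, N) reduced on axes (1, 2) has the same layout as (8, 24 * 48, N) reduced on axis 1, which is one of the shapes benchmarked in this section. A quick numpy check of that equivalence, with N = 10 chosen arbitrarily:

```python
import numpy

n = 10  # arbitrary value for N
x = numpy.random.rand(8, 24, 48, n).astype(numpy.float32)

# KRK: kept axis 0, reduced axes 1 and 2 (merged), kept axis 3.
direct = numpy.amax(x, axis=(1, 2))                    # shape (8, n)
merged = numpy.amax(x.reshape(8, 24 * 48, n), axis=1)  # shape (8, n)

assert direct.shape == merged.shape == (8, n)
assert numpy.array_equal(direct, merged)
```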

(8, 24, 48, N), axis=(1, 2)

```python
axes = (1, 2)
df, piv, ax = benchmark_op(axes, shape_fct=lambda dim: (8, 24, 48, dim))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


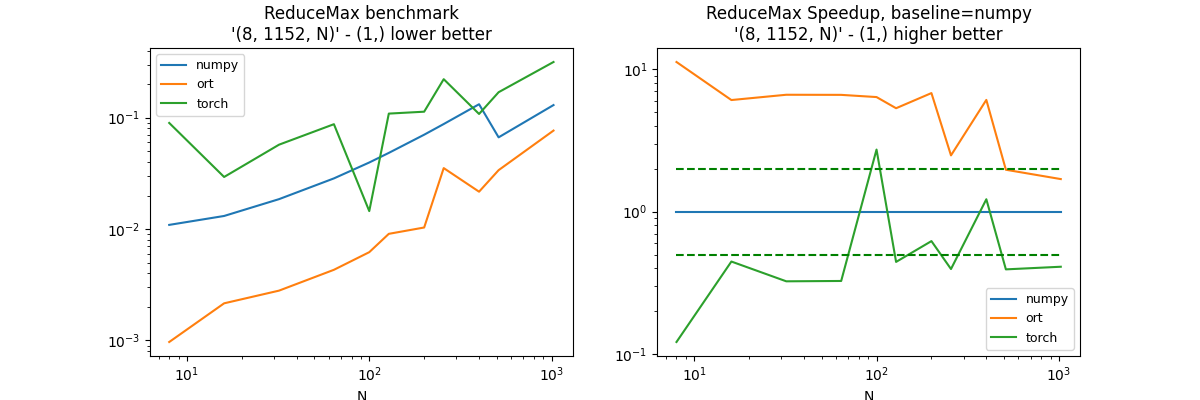

(8, 24 * 48, N), axis=1

```python
axes = (1, )
df, piv, ax = benchmark_op(axes, shape_fct=lambda dim: (8, 24 * 48, dim))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


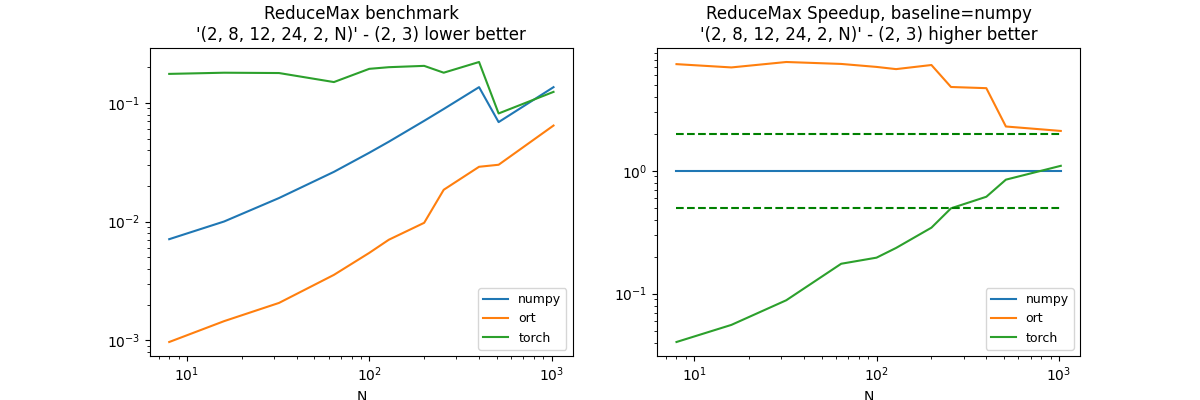

(2, 8, 12, 24, 2, N), axis=(2, 3)

```python
axes = (2, 3)
df, piv, ax = benchmark_op(axes, shape_fct=lambda dim: (2, 8, 12, 24, 2, dim))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


Reduction on a particular case RKR

RKR means a reduced axis, then a kept axis, then a reduced axis.
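In the RKR case (N, 64, 16, 16) reduced on axes (0, 2, 3), the last two axes merge into one, giving (N, 64, 256) reduced on axes (0, 2). Read as an NCHW tensor, which is an assumption about the shape's meaning, this is a per-channel maximum over the batch and spatial axes. A numpy sketch with N = 4:

```python
import numpy

n = 4  # arbitrary batch size N
x = numpy.random.rand(n, 64, 16, 16).astype(numpy.float32)

# RKR: reduced axis 0, kept axis 1, reduced axes 2 and 3 (merged).
direct = numpy.amax(x, axis=(0, 2, 3))                       # shape (64,)
merged = numpy.amax(x.reshape(n, 64, 16 * 16), axis=(0, 2))  # shape (64,)

# Same values as an explicit loop over the kept (channel) axis.
per_channel = numpy.array([x[:, c].max() for c in range(64)])

assert numpy.array_equal(direct, merged)
assert numpy.array_equal(direct, per_channel)
```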

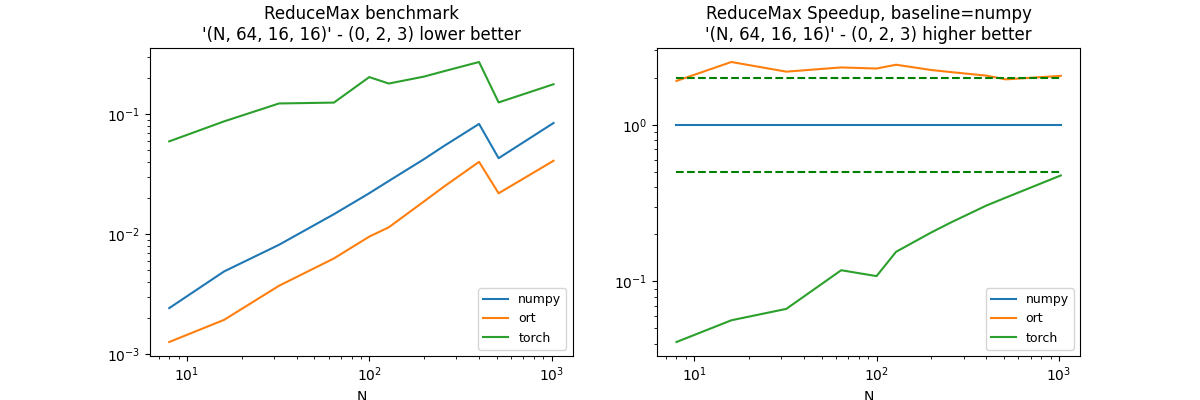

(N, 64, 16, 16), axis=(0, 2, 3)

```python
axes = (0, 2, 3)
df, piv, ax = benchmark_op(
    axes, shape_fct=lambda dim: (dim, 64, 16, 16))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


Reduction on a particular case RKRK

RKRK means reduced and kept axes alternate, so no consecutive axes can be merged.
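When reduced and kept axes alternate, as with axes (0, 2) on (8, 24, 48, N), merging alone cannot bring the reduced axes together. One possible strategy, sketched here with numpy and not taken from any of the benchmarked libraries, is to move the reduced axes to the front, pay for a contiguous copy, and fall back to the RK case. N = 10 is arbitrary.

```python
import numpy

n = 10  # arbitrary value for N
x = numpy.random.rand(8, 24, 48, n).astype(numpy.float32)

direct = numpy.amax(x, axis=(0, 2))  # shape (24, n)

# Moving the reduced axes to the front turns RKRK into RK, at the
# price of a copy: the transposed view is not contiguous.
moved = numpy.transpose(x, (0, 2, 1, 3))              # (8, 48, 24, n)
as_rk = numpy.ascontiguousarray(moved).reshape(8 * 48, 24 * n)
via_rk = as_rk.max(axis=0).reshape(24, n)

assert numpy.array_equal(direct, via_rk)
```

Whether the copy pays off depends on the sizes involved; the benchmark in this section measures the libraries as they are, without such a rewrite.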

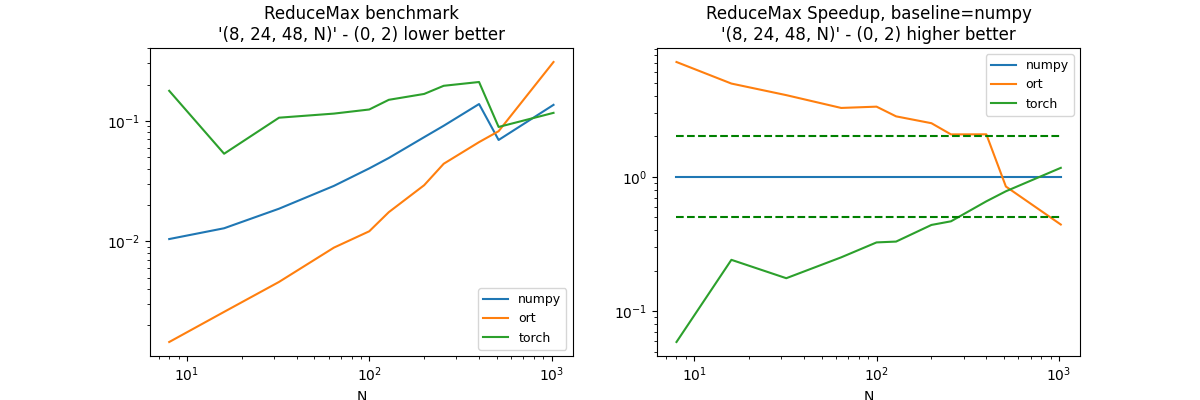

(8, 24, 48, N), axis=(0, 2)

```python
axes = (0, 2)
df, piv, ax = benchmark_op(axes, shape_fct=lambda dim: (8, 24, 48, dim))
dfs.append(df)
df.pivot(index="fct", columns="N", values="average")
```


Conclusion

Some of the configurations should be investigated further (see l-reducesum-problem1). The tensorflow reduction along a single axis seems to be evaluated lazily.
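One way to test the laziness hypothesis is to make every timed function return a materialised numpy array, so any deferred work is paid inside the measurement. The sketch below assumes each function returns a single array or tensor that implements `__array__` (TensorFlow eager tensors can be converted with `numpy.asarray`). `materialized` and `time_loop` are hypothetical helpers, not part of cpyquickhelper; only numpy is used here so the sketch runs on its own.

```python
import time

import numpy


def materialized(fct):
    """Wrap `fct` so the timed call also converts its result to numpy.

    numpy.asarray forces a deferred result (any object implementing
    __array__) to be computed inside the measured call.
    """
    def wrapper(x, y):
        return numpy.asarray(fct(x, y))
    return wrapper


def time_loop(fct, xs, ys, number=5):
    """Average time of one pass over (xs, ys), similar to loop_fct."""
    begin = time.perf_counter()
    for _ in range(number):
        for x, y in zip(xs, ys):
            fct(x, y)
    return (time.perf_counter() - begin) / number


xs = [numpy.random.rand(8, 24, 48, 64).astype(numpy.float32) for _ in range(4)]
ys = [numpy.array([3], dtype=numpy.int64) for _ in xs]
fct = materialized(lambda x, y: numpy.amax(x, tuple(y)))
print("average: %1.6f s" % time_loop(fct, xs, ys))
```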

```python
merged = pandas.concat(dfs)
name = "reducemax"
merged.to_csv("plot_%s.csv" % name, index=False)
merged.to_excel("plot_%s.xlsx" % name, index=False)
plt.savefig("plot_%s.png" % name)
plt.show()
```

Total running time of the script: (3 minutes 36.816 seconds)