Note

Click here to download the full example code

Compares implementations of Tranpose#

This example compares the numpy.transpose from numpy, to onnxruntime implementation. If available, tensorflow and pytorch are included as well.

Available optimisation#

The code shows which parallelisation optimisation could be used, AVX or SSE and the number of available processors. Both numpy and torch have lazy implementations, the function switches dimensions and strides but does not move any data. That’s why function contiguous was called in both cases.

import numpy

import pandas

import matplotlib.pyplot as plt

from onnxruntime import InferenceSession

from skl2onnx.common.data_types import FloatTensorType

from skl2onnx.algebra.onnx_ops import OnnxTranspose

from cpyquickhelper.numbers import measure_time

from tqdm import tqdm

from mlprodict.testing.experimental_c_impl.experimental_c import code_optimisation

print(code_optimisation())

Out:

AVX-omp=8

Transpose implementations#

Function einsum is used from tensorflow and pytorch instead of transpose. The equation reflects the required transposition.

try:

from tensorflow import transpose as tf_transpose, convert_to_tensor

except ImportError:

tf_transpose = None

try:

from torch import einsum as torch_einsum, from_numpy

except ImportError:

torch_einsum = None

def build_ort_transpose(perm, op_version=12):

node = OnnxTranspose('x', perm=perm, op_version=op_version,

output_names=['z'])

onx = node.to_onnx(inputs=[('x', FloatTensorType())],

target_opset=op_version)

sess = InferenceSession(onx.SerializeToString())

return lambda x, y: sess.run(None, {'x': x})

def loop_fct(fct, xs, ys):

for x, y in zip(xs, ys):

fct(x, y)

def perm2eq(perm):

first = "".join(chr(97 + i) for i in range(len(perm)))

second = "".join(first[p] for p in perm)

return "%s->%s" % (first, second)

def benchmark_op(perm, repeat=5, number=5, name="Transpose", shape_fct=None):

if shape_fct is None:

def shape_fct(dim): return (3, dim, 1, 512)

ort_fct = build_ort_transpose(perm)

res = []

for dim in tqdm([8, 16, 32, 64, 100, 128, 200,

256, 400, 512, 1024]):

shape = shape_fct(dim)

n_arrays = 10 if dim < 512 else 4

xs = [numpy.random.rand(*shape).astype(numpy.float32)

for _ in range(n_arrays)]

ys = [perm for _ in range(n_arrays)]

equation = perm2eq(perm)

info = dict(perm=perm, shape=shape)

# numpy

ctx = dict(

xs=xs, ys=ys,

fct=lambda x, y: numpy.ascontiguousarray(numpy.transpose(x, y)),

loop_fct=loop_fct)

obs = measure_time(

"loop_fct(fct, xs, ys)",

div_by_number=True, context=ctx, repeat=repeat, number=number)

obs['dim'] = dim

obs['fct'] = 'numpy'

obs.update(info)

res.append(obs)

# onnxruntime

ctx['fct'] = ort_fct

obs = measure_time(

"loop_fct(fct, xs, ys)",

div_by_number=True, context=ctx, repeat=repeat, number=number)

obs['dim'] = dim

obs['fct'] = 'ort'

obs.update(info)

res.append(obs)

if tf_transpose is not None:

# tensorflow

ctx['fct'] = tf_transpose

ctx['xs'] = [convert_to_tensor(x) for x in xs]

ctx['ys'] = [convert_to_tensor(y) for y in ys]

obs = measure_time(

"loop_fct(fct, xs, ys)",

div_by_number=True, context=ctx, repeat=repeat, number=number)

obs['dim'] = dim

obs['fct'] = 'tf'

obs.update(info)

res.append(obs)

# tensorflow with copy

ctx['fct'] = lambda x, y: tf_transpose(

convert_to_tensor(x)).numpy()

ctx['xs'] = xs

ctx['ys'] = ys

obs = measure_time(

"loop_fct(fct, xs, ys)",

div_by_number=True, context=ctx, repeat=repeat, number=number)

obs['dim'] = dim

obs['fct'] = 'tf_copy'

obs.update(info)

res.append(obs)

if torch_einsum is not None:

# torch

ctx['fct'] = lambda x, y: torch_einsum(equation, x).contiguous()

ctx['xs'] = [from_numpy(x) for x in xs]

ctx['ys'] = ys # [from_numpy(y) for y in ys]

obs = measure_time(

"loop_fct(fct, xs, ys)",

div_by_number=True, context=ctx, repeat=repeat, number=number)

obs['dim'] = dim

obs['fct'] = 'torch'

obs.update(info)

res.append(obs)

# Dataframes

shape_name = str(shape).replace(str(dim), "N")

df = pandas.DataFrame(res)

df.columns = [_.replace('dim', 'N') for _ in df.columns]

piv = df.pivot('N', 'fct', 'average')

rs = piv.copy()

for c in ['ort', 'torch', 'tf', 'tf_copy']:

if c in rs.columns:

rs[c] = rs['numpy'] / rs[c]

rs['numpy'] = 1.

# Graphs.

fig, ax = plt.subplots(1, 2, figsize=(12, 4))

piv.plot(logx=True, logy=True, ax=ax[0],

title="%s benchmark\n%r - %r - %s"

" lower better" % (name, shape_name, perm, equation))

ax[0].legend(prop={"size": 9})

rs.plot(logx=True, logy=True, ax=ax[1],

title="%s Speedup, baseline=numpy\n%r - %r - %s"

" higher better" % (name, shape_name, perm, equation))

ax[1].plot([min(rs.index), max(rs.index)], [0.5, 0.5], 'g--')

ax[1].plot([min(rs.index), max(rs.index)], [2., 2.], 'g--')

ax[1].legend(prop={"size": 9})

return df, rs, ax

dfs = []

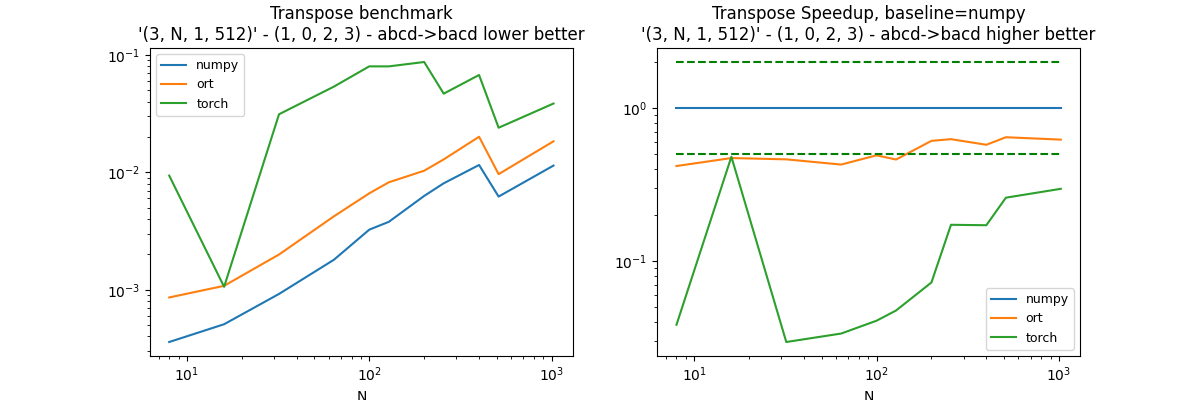

First permutation: (1, 0, 2, 3)#

perm = (1, 0, 2, 3)

df, piv, ax = benchmark_op(perm)

dfs.append(df)

df.pivot("fct", "N", "average")

Out:

0%| | 0/11 [00:00<?, ?it/s]

9%|9 | 1/11 [00:00<00:02, 3.67it/s]

27%|##7 | 3/11 [00:01<00:03, 2.36it/s]

36%|###6 | 4/11 [00:02<00:05, 1.24it/s]

45%|####5 | 5/11 [00:05<00:07, 1.30s/it]

55%|#####4 | 6/11 [00:07<00:08, 1.65s/it]

64%|######3 | 7/11 [00:10<00:07, 1.98s/it]

73%|#######2 | 8/11 [00:11<00:05, 1.93s/it]

82%|########1 | 9/11 [00:14<00:04, 2.16s/it]

91%|######### | 10/11 [00:15<00:01, 1.84s/it]

100%|##########| 11/11 [00:17<00:00, 1.86s/it]

100%|##########| 11/11 [00:17<00:00, 1.61s/it]

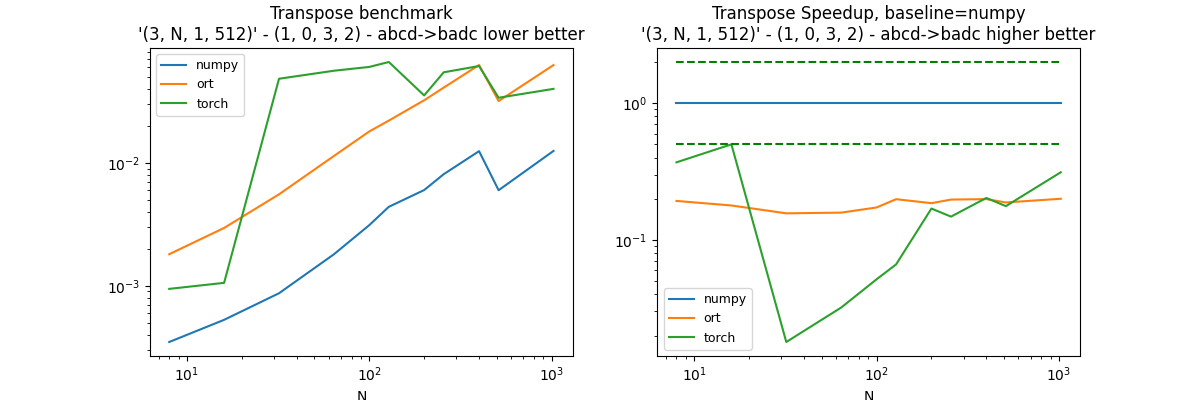

Second permutation: (0, 1, 3, 2)#

perm = (1, 0, 3, 2)

df, piv, ax = benchmark_op(perm)

dfs.append(df)

df.pivot("fct", "N", "average")

Out:

0%| | 0/11 [00:00<?, ?it/s]

18%|#8 | 2/11 [00:00<00:00, 9.50it/s]

27%|##7 | 3/11 [00:01<00:05, 1.56it/s]

36%|###6 | 4/11 [00:03<00:07, 1.07s/it]

45%|####5 | 5/11 [00:05<00:08, 1.42s/it]

55%|#####4 | 6/11 [00:07<00:08, 1.75s/it]

64%|######3 | 7/11 [00:09<00:07, 1.81s/it]

73%|#######2 | 8/11 [00:12<00:06, 2.10s/it]

82%|########1 | 9/11 [00:16<00:05, 2.58s/it]

91%|######### | 10/11 [00:18<00:02, 2.37s/it]

100%|##########| 11/11 [00:21<00:00, 2.59s/it]

100%|##########| 11/11 [00:21<00:00, 1.93s/it]

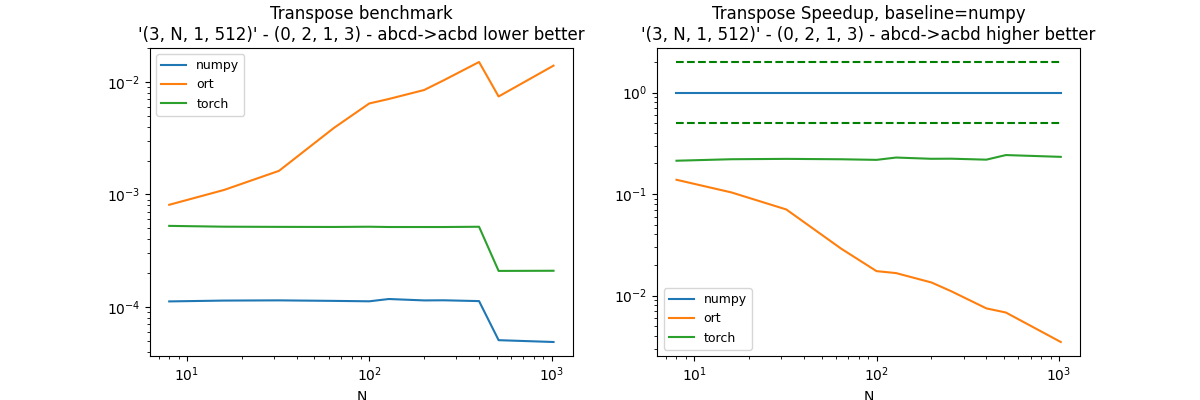

Third permutation: (0, 2, 1, 3)#

This transposition is equivalent to a reshape because it only moves the empty axis. The comparison is entirely fair as the cost for onnxruntime includes a copy from numpy to onnxruntime, a reshape = another copy, than a copy back to numpy. Tensorflow and pytorch seems to have a lazy implementation in this case.

perm = (0, 2, 1, 3)

df, piv, ax = benchmark_op(perm)

dfs.append(df)

df.pivot("fct", "N", "average")

Out:

0%| | 0/11 [00:00<?, ?it/s]

27%|##7 | 3/11 [00:00<00:00, 17.19it/s]

45%|####5 | 5/11 [00:00<00:00, 8.13it/s]

64%|######3 | 7/11 [00:01<00:00, 5.14it/s]

73%|#######2 | 8/11 [00:01<00:00, 4.11it/s]

82%|########1 | 9/11 [00:02<00:00, 3.03it/s]

91%|######### | 10/11 [00:02<00:00, 3.10it/s]

100%|##########| 11/11 [00:03<00:00, 2.58it/s]

100%|##########| 11/11 [00:03<00:00, 3.64it/s]

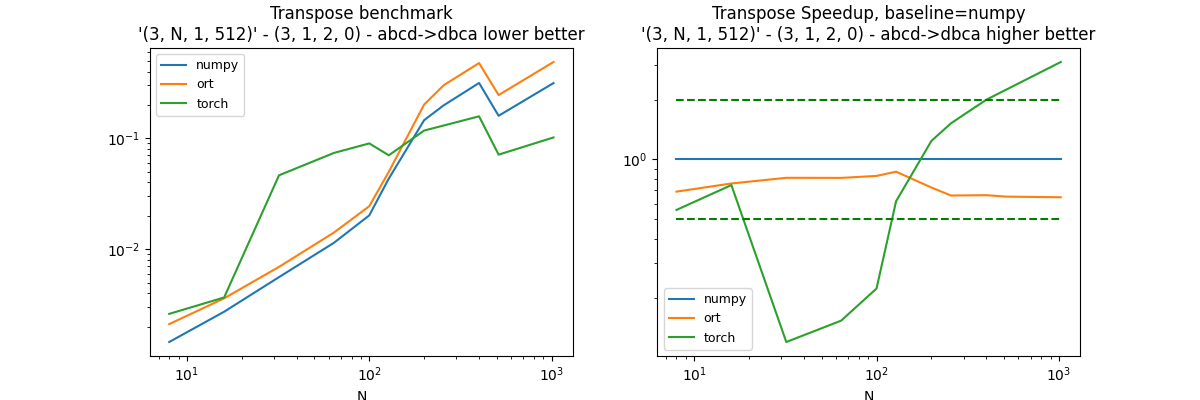

Fourth permutation: (3, 1, 2, 0)#

perm = (3, 1, 2, 0)

df, piv, ax = benchmark_op(perm)

dfs.append(df)

df.pivot("fct", "N", "average")

Out:

0%| | 0/11 [00:00<?, ?it/s]

9%|9 | 1/11 [00:00<00:01, 6.18it/s]

18%|#8 | 2/11 [00:00<00:01, 4.52it/s]

27%|##7 | 3/11 [00:01<00:06, 1.25it/s]

36%|###6 | 4/11 [00:04<00:10, 1.48s/it]

45%|####5 | 5/11 [00:07<00:13, 2.18s/it]

55%|#####4 | 6/11 [00:12<00:14, 2.85s/it]

64%|######3 | 7/11 [00:23<00:23, 5.76s/it]

73%|#######2 | 8/11 [00:39<00:26, 8.98s/it]

82%|########1 | 9/11 [01:03<00:27, 13.67s/it]

91%|######### | 10/11 [01:15<00:13, 13.16s/it]

100%|##########| 11/11 [01:38<00:00, 16.12s/it]

100%|##########| 11/11 [01:38<00:00, 8.95s/it]

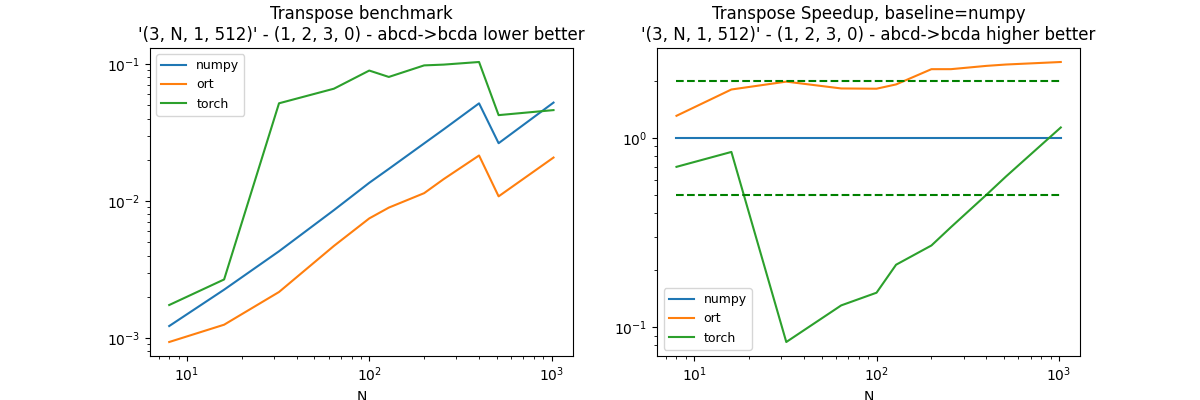

Fifth permutation: (1, 2, 3, 0)#

perm = (1, 2, 3, 0)

df, piv, ax = benchmark_op(perm)

dfs.append(df)

df.pivot("fct", "N", "average")

Out:

0%| | 0/11 [00:00<?, ?it/s]

9%|9 | 1/11 [00:00<00:01, 9.50it/s]

18%|#8 | 2/11 [00:00<00:01, 7.05it/s]

27%|##7 | 3/11 [00:01<00:06, 1.33it/s]

36%|###6 | 4/11 [00:03<00:08, 1.25s/it]

45%|####5 | 5/11 [00:06<00:10, 1.82s/it]

55%|#####4 | 6/11 [00:09<00:10, 2.13s/it]

64%|######3 | 7/11 [00:12<00:10, 2.57s/it]

73%|#######2 | 8/11 [00:16<00:08, 2.97s/it]

82%|########1 | 9/11 [00:21<00:06, 3.48s/it]

91%|######### | 10/11 [00:23<00:03, 3.05s/it]

100%|##########| 11/11 [00:26<00:00, 3.10s/it]

100%|##########| 11/11 [00:26<00:00, 2.41s/it]

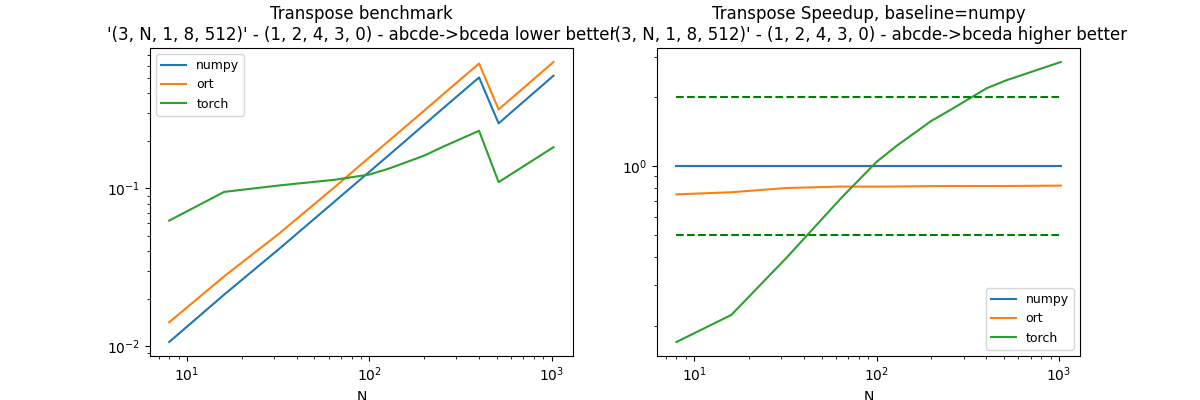

Six th permutation: (1, 2, 4, 3, 0)#

perm = (1, 2, 4, 3, 0)

df, piv, ax = benchmark_op(perm, shape_fct=lambda dim: (3, dim, 1, 8, 512))

dfs.append(df)

df.pivot("fct", "N", "average")

Out:

0%| | 0/11 [00:00<?, ?it/s]

9%|9 | 1/11 [00:02<00:22, 2.22s/it]

18%|#8 | 2/11 [00:05<00:27, 3.07s/it]

27%|##7 | 3/11 [00:10<00:31, 3.98s/it]

36%|###6 | 4/11 [00:18<00:38, 5.44s/it]

45%|####5 | 5/11 [00:29<00:43, 7.29s/it]

55%|#####4 | 6/11 [00:42<00:46, 9.20s/it]

64%|######3 | 7/11 [01:01<00:49, 12.39s/it]

73%|#######2 | 8/11 [01:24<00:47, 15.99s/it]

82%|########1 | 9/11 [02:00<00:44, 22.10s/it]

91%|######### | 10/11 [02:18<00:20, 20.81s/it]

100%|##########| 11/11 [02:53<00:00, 25.15s/it]

100%|##########| 11/11 [02:53<00:00, 15.75s/it]

Conclusion#

All libraries have similar implementations. onnxruntime measures includes 2 mores copies, one to copy from numpy container to onnxruntime container, another one to copy back from onnxruntime container to numpy. Parallelisation should be investigated.

merged = pandas.concat(dfs)

name = "transpose"

merged.to_csv("plot_%s.csv" % name, index=False)

merged.to_excel("plot_%s.xlsx" % name, index=False)

plt.savefig("plot_%s.png" % name)

plt.show()

Total running time of the script: ( 5 minutes 54.786 seconds)